Multi-Agent Simulation Platform

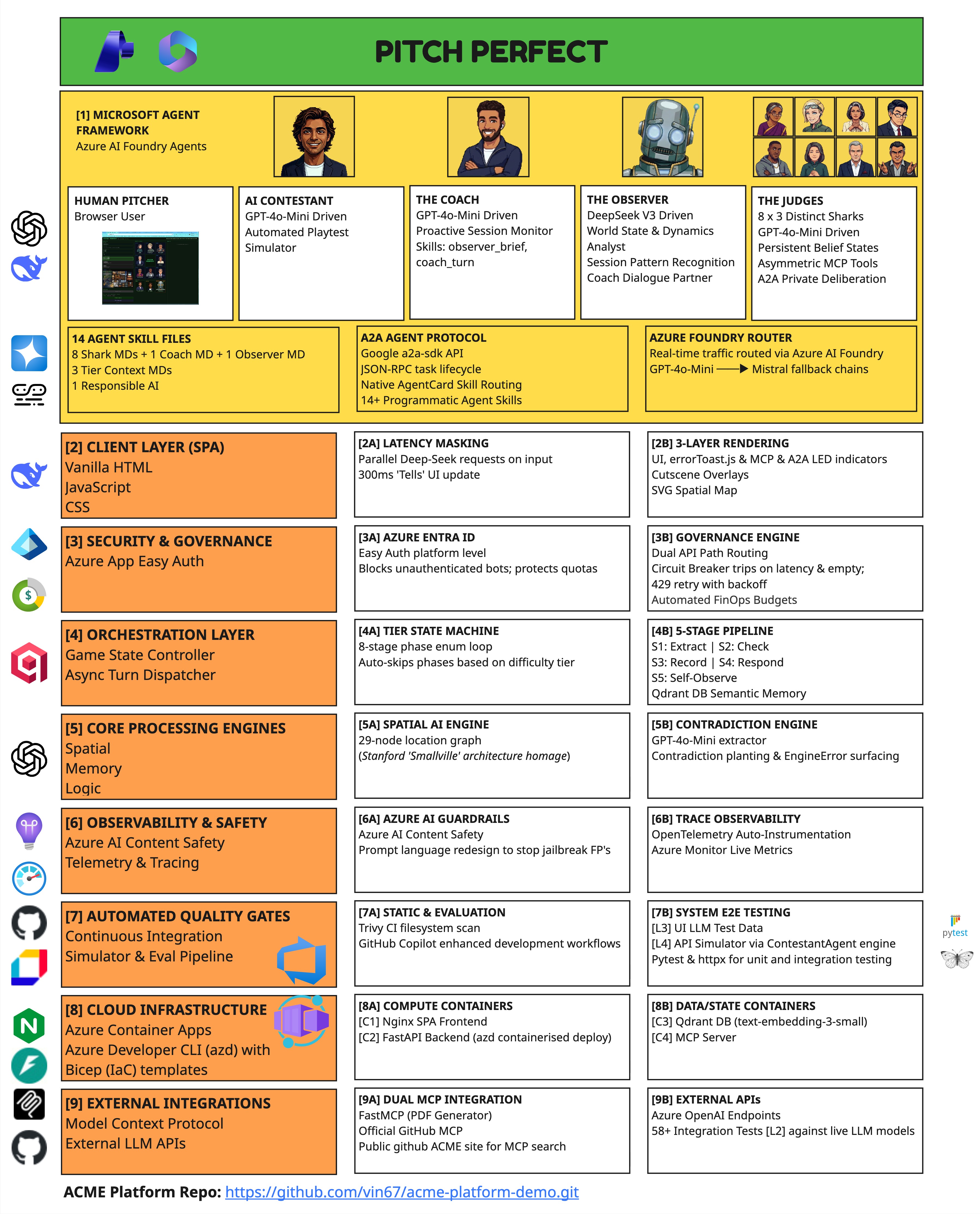

Pitch Perfect — AI Shark Tank Simulator on Azure

The Problem

AI is disrupting established roles faster than education can respond. The ability to make a compelling case for an idea is becoming a core survival skill. Yet there is nowhere safe to practise — no mock panel remembers what you said ten minutes ago, and no AI tool challenges whether your story holds together under pressure.

The Deeper Problem

The most important work ahead happens in the space between human judgement and machine capability — genuine collaboration where each does what it does best. The capability that survives an uncertain future is not a credential. It is the ability to stand in any room, at any stakes, and make your case with clarity, consistency, and conviction.

The Solution

Pitch Perfect is a multi-agent simulation platform where autonomous AI agents with distinct personalities, persistent memory, and specialist tools challenge you across a full session. They extract every claim, score it by importance, and check it against everything you've said — even phases ago. Pitch Perfect is the demo. The simulation platform is the product.

In an uncertain, cynical world...

Every claim remembered.

Every contradiction caught.

Most AI agents help you.

These challenge you.

They remember why.

These agents don't just know what you said.

They know when they stopped believing you.

Train against AI that won't let you hide.

The Engineering Challenge

Pitch Perfect is an AI Shark Tank simulator — you pitch your startup idea to a panel of AI sharks who remember everything you say, catch your contradictions, and decide whether to invest. Each shark has distinct expertise, personality, and tolerance thresholds. A coach agent guides you through the experience.

I built the whole platform locally first using Microsoft Foundry containers with smaller models like Phi-4-mini — fast iteration, no cloud costs during development. Once the architecture held up, I deployed the same containers to Azure Container Apps with larger models for the hackathon demo.

The result: 8 autonomous shark agents plus a coach, maintaining persistent semantic memory, each independently deciding what to say, recalling relevant context from earlier phases, and detecting when a pitcher contradicts something they said 20 turns ago — all running through a 5-stage async pipeline with 11 model deployments across Azure AI Foundry.

Azure Architecture Overview

Azure OpenAI Service

11 model deployments with purpose-driven routing and 2 platform-managed model routers for non-latency-critical paths. Each task type routes to the optimal model for cost, latency, and quality.

- GPT-4o-Mini — Primary agent reasoning (sharks, coach, extraction)

- GPT-5-Mini — Enhanced reasoning path

- DeepSeek-V3 — Observer synthesis, batch tells

- Phi-4-Mini — Lightweight claim extraction

- Phi-4-Reasoning — Structured evaluation

- Mistral-Small — Fast classification, single tells

- Grok-3 / Grok-3-Mini — Advisory analysis

- text-embedding-3-small — 1536-dim embeddings

- 2 Model Routers — Platform-managed failover

Azure AI Foundry

Microsoft Agent Framework provides persistent agents with per-session threads and tool call observability.

Runs on local Docker containers for development. Also deploys to Azure via Azure Container Apps for production.

Azure Container Apps

Five Docker Compose services deploy identically to cloud via azd up.

Zero architecture changes between local development and Azure deployment. Same containers, same networking, same volumes.

MCP Servers

Two MCP microservices: the analysis server with 5 tools and PDF report generation, plus a GitHub MCP server for repository integration.

Generates session summaries, consistency analysis, improvement plans, feedback reports, and exportable PDF documents via REST API.

GovernedOpenAIClient — Single Gateway

Every LLM call in the platform flows through a single governed client. This is the architectural choke point by design — one place to add logging, one place to swap models, one place to enforce budgets.

Traffic Governance

- •Per-session request budgets with graceful exhaustion

- •Token tracking across all model calls

- •Model routing based on agent requirements

- •Content Safety SDK pre-check before LLM calls

Resilience

- •Fallback chain: GPT-5-Mini → GPT-4o-Mini → Mistral-Small → DeepSeek-V3

- •2 Model Router deployments for non-latency-critical paths

- •Exponential backoff retry: 5s → 10s → 15s (max 3 attempts)

- •Zero 500 errors reach the user — ever

Dual API Paths

Dynamically routes between Azure OpenAI's Responses API (primary) and Chat Completions (fallback for non-OpenAI Foundry models). One client handles both API surfaces transparently.

Azure RAI Content Safety

Content Safety SDK pre-check validates prompts before LLM submission. Solved real Azure AI jailbreak filter false-positives by redesigning prompt language and building slim message construction.

“In production, you need exactly one place to add logging, one place to swap models. If every agent calls Azure OpenAI directly, you've lost control.”

Memory Architecture — Stanford Generative Agents + Qdrant

Stanford Three-Factor Retrieval

score = 0.5 × recency + 3.0 × relevance + 2.0 × importanceCosine similarity — semantic match is the primary retrieval signal

Ensures inconsistencies (importance=1.0) always surface in recall

Low weight — early-phase assertions must remain accessible later

Single-Collection Design

One Qdrant collection (memories) with per-agent payload filters. Scales cleanly without creating N collections per session. Agent isolation enforced at the storage layer via session ID + agent ID filtering.

“I used ChromaDB at GovHack 2024. For persistent multi-agent memory with session scoping, Qdrant was the better tool — metadata filtering, persistent collections, and proper payload indexing out of the box.”

Cross-Phase Inconsistency Detection

Phi-4-mini extracts structured claims from natural language (~150 tokens)

Claims upserted into ClaimTable with topic, value, phase, turn

New claims checked against all prior claims on the same topic

Agent surfaces inconsistency in character, referencing original value

Lightweight Extraction

Phi-4-mini handles claim extraction at ~150 tokens per call. No need for GPT-4o on a structured extraction task — use the smallest model that gets the job done.

Difficulty-Gated Sensitivity

Inconsistency thresholds vary by difficulty tier. Easy mode catches only major discrepancies. Hard mode flags even minor deviations — the system adapts its scrutiny to the scenario.

“The part I'm most proud of is the consistency layer. The system remembers everything you said — and if you contradict yourself twenty turns later, it notices.”

5-Stage Async Orchestration Pipeline

asyncio.gather Parallelism

Stages are strictly sequenced where dependencies exist — claims must be extracted before inconsistency checks — but maximally parallel within each stage. 3+ simultaneous LLM calls complete in under 5 seconds.

ContextVar Isolation

Each async task runs in its own context. Session state, memory streams, and claim tables are task-scoped — preventing cross-agent data leakage during parallel execution.

“Without context isolation, Agent 3's state leaks into Agent 5's reasoning. ContextVar gives you task-scoped state without passing context objects through every function signature.”

Multi-Phase Session Orchestration

Beyond the per-turn pipeline, sessions progress through up to 8 distinct phases. A while-loop in advance_phase() skips tier-inappropriate phases automatically — tier rules live in a data dict, not scattered conditionals.

| Phase | Handler | Description |

|---|---|---|

| Meet Coach | Advisory | Coach introduces the session, learns about your pitch |

| Green Room | 1-on-1 | 1-on-1 shark interviews before the main pitch |

| Prep | Advisory | Coach gives prep advice based on shark impressions |

| Tank | Multi-agent | Multi-shark pitch — parallel agents respond each turn |

| Judge Confer | Auto-trigger | Sharks confer privately (Pitch Competition tier only) |

| Negotiation | Multi-agent | Interactive offers (Angel Round / Series A tiers only) |

| Debrief | Advisory | Coach feedback + downloadable PDF report |

| Panel Verdict | Auto-trigger | Panel announces result (Pitch Competition tier only) |

Tier-Conditional Skipping

3 tiers — Pitch Competition, Angel Round, Series A — produce meaningfully different session experiences. Phase rules live in a data dict — advance_phase() loops until it finds a valid phase for the current tier.

Tier-Differentiated Behaviour

Each agent has tier-specific behaviour defined in markdown skill files. Pitch Competition is forgiving; Series A demands precision. Same agents, different personalities per tier — no code changes.

Phase Fork by Tier

Pitch Competition culminates in a panel verdict. Angel Round and Series A skip the verdict and enter an interactive negotiation phase instead. The session arc adapts to the tier.

“The per-turn pipeline handles what happens within a turn. The phase state machine handles what happens across the session. Both are data-driven, both are tier-aware, and neither requires code changes to reconfigure.”

Agent Architecture

Three Core Methods

respond()Generates the agent's turn output. Routes through Agent Framework when enabled, falls back to direct Azure OpenAI calls. Can invoke registered tools mid-turn.

decide()Autonomous decision-making before each turn. Returns a structured AgentAction: SPEAK, WAIT, COMMIT, EXIT, or INVITE_COLLAB. Not scripted branching — LLM-evaluated.

generate_tells()Produces non-verbal behavioural signals (expressions, micro-reactions) in parallel with the main response — masking latency with useful output.

Agent Tools (20+ total)

| Tool | Type | Purpose |

|---|---|---|

| 3 base tools | Base | Memory recall, observation recording, contradiction detection — available to all agents |

| 8 specialist tools | Specialist | One per agent archetype — domain-specific analysis unique to each agent's expertise |

| 5+ MCP tools | MCP | Session analysis, consistency reports, improvement plans, PDF export, GitHub integration |

Data-Driven Agent Configuration

14 markdown skill files define personality, domain knowledge, and tier behaviour. New agent archetypes require zero code changes — drop in a new skill file and the platform picks it up.

Speaker Resolution

Up to 8 agents decide independently each turn (SPEAK/WAIT/etc.), then a resolution algorithm picks 2 speakers based on interest level and turns since last spoke — with force-speak after 3 silent turns. Genuine multi-agent coordination.

Google A2A Protocol

Private shark deliberation uses Google's Agent-to-Agent (A2A) protocol spec. Agents communicate directly during the Judge Confer phase without user visibility, forming consensus on investment decisions.

Topic Normalisation

79 aliases mapping claim variations to 24 canonical topics. Ensures “revenue”, “annual revenue”, and “yearly income” all resolve to the same claim topic for consistent contradiction detection.

Spatial AI Engine

29-node location graph models the physical environment — a Smallville-inspired spatial awareness system. Agents have location context that influences their dialogue and interactions.

Heuristic Exit Logic

Per-agent personality-driven autonomous exit decisions. Each agent has unique exit thresholds — one exits at low interest after a major inconsistency; another exits after a failed chemistry test. Not scripted; emergent from agent state.

“If you need to redeploy to change agent behaviour, your architecture is wrong. Skill files are the config layer — personality, knowledge, and rules live in markdown, not Python.”

Key Design Decisions

Multi-Model Routing

11 model deployments with purpose-driven routing. Each task type gets the optimal model for cost, latency, and quality.

- GPT-4o-Mini / GPT-5-Mini — Primary reasoning & decisions

- DeepSeek-V3 — Observer synthesis

- Phi-4-Mini / Phi-4-Reasoning — Extraction & evaluation

- Mistral-Small / Grok-3 — Classification & analysis

Qdrant over ChromaDB

ChromaDB works for prototypes. For production multi-agent memory with session scoping and metadata filtering:

- Session-scoped payload filtering

- Persistent named collections

- Per-agent isolation at query time

- Docker-native with volume persistence

asyncio over Celery

This is real-time orchestration, not a job queue. Agents need to respond within a single HTTP request cycle.

- Sub-5s latency for parallel LLM calls

- ContextVar isolation per async task

- No message broker dependency

- Native Python 3.12 async

Feature-Flagged Agent Framework

The platform runs fully without an Azure subscription. Agent Framework integration is toggled with a single flag.

USE_AGENT_FRAMEWORK=true— Full Foundry integrationUSE_AGENT_FRAMEWORK=false— Direct Azure OpenAI calls- Graceful degradation, no code changes

- AF adds observability, not core logic

Infrastructure

nginx SPA

Single-file frontend

FastAPI

Orchestration & governance

Qdrant

Vector DB with persistence

MCP Server

Analysis & PDF reports

GitHub MCP

Repository integration

$ docker compose up # Local development — 5 containers $ azd up # Azure deployment — zero architecture changes

“Zero-to-deployed in one command. That's not marketing — that's the actual developer experience. Same containers, same networking, same volumes. Local and cloud are architecturally identical.”

DevSecOps CI/CD

Here's the thing about hackathon projects — most of them deploy from someone's laptop. I wanted this one to ship like a real product. Infrastructure as code, automated security scanning, no stored credentials, no portal clicking. The kind of pipeline you'd actually trust in production.

Infrastructure as Code — Bicep

7 Bicep modules define everything: Container Apps Environment, ACR, Key Vault with Managed Identity RBAC, Azure Files for vector DB persistence, Application Insights, and a budget alert. Subscription-scoped deployment creates its own resource group with deterministic naming. No portal clicking, no manual resource creation.

Zero-Delta Local-to-Cloud

The same 5 Docker containers that run via docker compose up deploy to Azure Container Apps via azd up. nginx reverse-proxies API, MCP, and static assets identically in both environments. The frontend bakes assets into the container at build time — no volume mounts in cloud, no missing files on deployment.

Dockerfile Hardening

Multi-stage builds strip build tooling from runtime images. All Python services run as non-root. Healthchecks use Python stdlib — no curl installed. nginx adds security headers (X-Frame-Options, X-Content-Type-Options, X-XSS-Protection, Referrer-Policy) and gzip compression.

Secrets & Identity

Key Vault holds API keys with RBAC-scoped access — the API container's system-assigned Managed Identity gets Key Vault Secrets User, nothing else. Secrets never appear in environment variables, config files, or container images.

GitHub Actions Pipeline

Two workflows. The PR gate runs 848+ unit tests on every pull request — nothing merges without passing. The main branch workflow is the interesting one:

Filesystem security scan

848+ tests, mocked LLMs

Remote AMD64 builds

OIDC federation

OIDC federation means no stored credentials in GitHub — no service principal secrets to rotate. Images build remotely in ACR on AMD64, solving the ARM Mac to cloud architecture mismatch without cross-compilation.

$ azd up # 11 Azure resources provisioned, 5 images built,

# security scanned, identity-bound, serving traffic“I've seen too many hackathon projects that work on the presenter's laptop and nowhere else. This one deploys from a GitHub Actions workflow with security scanning, OIDC federation, and zero stored secrets. That's not over-engineering — that's how you show the platform actually works.”

Testing & Production Maturity

I ended up with four levels of testing — each one catches things the others miss.

Unit Tests — Stubbed LLMs

848+ unit tests with mocked LLM calls. Fast, deterministic, run on every commit. Pytest markers separate these from slower integration runs. Covers memory operations, claim extraction, pipeline sequencing, and agent decision logic.

Integration Tests — Real LLMs

60+ integration tests running specific scenarios against real Azure endpoints. These validate actual model behaviour — claim extraction accuracy, inconsistency detection sensitivity, and agent response quality under real latency conditions.

Eval Harness — Playtest Agent

A dedicated playtest agent drives the frontend end-to-end through scripted scenarios. Eval pipeline scores agent responses for consistency, personality adherence, and tool usage. Scenarios accumulate into a growing regression set.

Manual Frontend — LLM-Suggested Test Data

I drive the browser manually, but the LLM suggests what data to enter — edge cases, contradictions, phase transitions. The AI knows what scenarios stress the system; I observe how the frontend handles them.

Azure RAI Content Safety

Solved Azure AI content filter false-positives by redesigning prompt language (avoiding trigger phrases like “MUST”, “ABSOLUTE RULE”) and building slim message construction for Agent Framework threads.

“940 tests across four levels. Each level catches things the others miss — unit tests for logic, integration tests for model behaviour, eval harness for agent quality, and manual testing for the things you can only see by playing.”

“Pitch Perfect started as a hackathon idea and became a production-grade multi-agent platform — 8 sharks, 11 model deployments, 940 tests, and a single-command Azure deployment. The sharks remember everything.”